All published articles of this journal are available on ScienceDirect.

Medical Images Fusion with Patch Based Structure Tensor

Abstract

Nowadays medical imaging has played an important role in clinical use, which provide important clues for medical diagnosis. In medical image fusion, the extraction of some fine details and description is critical. To solve this problem, a modified structure tensor by considering similarity between two patches is proposed. The patch based filter can suppress noise and add the robustness of the eigen-values of the structure tensor by allowing the use of more information of far away pixels. After defining the new structure tensor, we apply it into medical image fusion with a multi-resolution wavelet theory. The features are extracted and described by the eigen-values of two multi-modality source data. To test the performance of the proposed scheme, the CT and MR images are used as input source images for medical image fusion. The experimental results show that the proposed method can produce better results compared to some related approaches.

1. INTRODUCTION

In the resent years, medical imaging plays an important role in various applications in clinical use, such as pathology analysis, clinical diagnosis or healing examinations etc. In view of the characteristic of medical image, we must get good quality image and complete relative medical image to ensure the diagnostic correctness. While one type modality input source image provides a certain kind of information about the human body and some other information are provided by some other kinds of the source images. For example, dense structures information like the bones and implants with less distortion are provide by computed tomography (CT) and X-ray while physiological changes detection information requires MRI imaging, which can visualize normal and pathological soft tissue. Furthermore, the information of blood flow is provided by PET scans, which is efficient with low spatial resolution case. Therefore, image fusion technology cast a light to integrate and present the different kinds of information from two or more imaging modality into a single image, which is more appreciated by hospital. Hence, the fusion of the medical images is becoming necessary nowadays which is more suitable for human perception and diagnoses by doctors.

Numerous techniques have been proposed in past decades to deal with medical image fusion. The most classical way is to select or average the density pixel-by-pixel from the input medical source images [1-4]. The statistical or decomposition methods are also empolyed. Some typical scheme includes medical image fusion methods based on wavelet analysis [5], weighted averaging to complex multi-resolution pyramid [6] or neural network approach [7, 8]. But for image fusion, wavelet decomposition is still a popular and important one.

In this paper, a novel algorithm for medical image fusion is proposed which employ a tensor matrix to describe some important feature information. We define a patch similarity based tensor matrix. Then it is used to extract local features from low frequency and high frequency wavelet coefficients. Onthebasisof these features, an average fusion rule is established. The remaining part of this paper is organized as follows: we first introduce wavelet framework and the classical structure tensor, and then we modify the new structure tensor by using the nonlocal mean filter in Section 2. In Section 3, we present a patch based fusion approach within the multi-resolution wavelet theory. Section 4 shows some experimental results of medical image fusion with comparison with some relative methods. A brief conclusion is given in Section 5.

2. WAVELET FRAMEWORK AND PATCH BASED STRUCTURE TENSOR

2.1. Multi-resolution Wavelet Framework

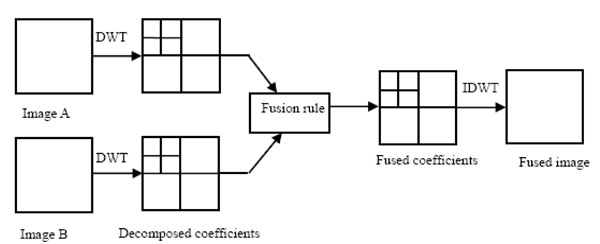

Wavelet analysis has many advantages and is an important tool for various image processing tasks, including medical image fusion. One essential step in wavelet image fusion is the combination of wavelet coefficient. Namely, the final results mainly depends on the merge of the two families coefficients derived the input source images. Fig. (1) illustrates a flowchart through which medical images are fused. The difference between different approaches lies in two aspects. The first aspect is the way to extract the features. And the second aspect is the fusion rule.

Wavelet-based image fusion framework.

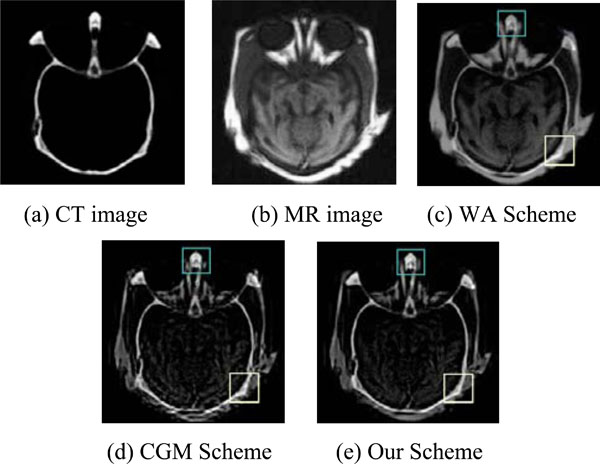

Fusion of medical images.

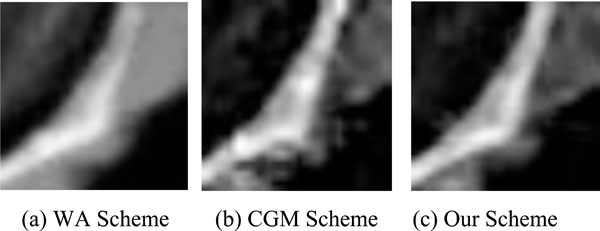

Center part of the fusion results.

Right part of the fusion results.

Comparison of the EOG data.

| WA | CGM | Our Scheme | |

|---|---|---|---|

| CT/MR | 4.0363 | 4.6583 | 5.1172 |

For two source medical images, we denote them as A and B respectively. Also the goal image of image fusion is denoted as image F. The main steps of the proposed algorithm are as follows:

- Image A and B are performed k-level discrete wavelet transform are applied two source input images A and B. After the wavelet decomposition, the high frequency and low frequency coefficients are obtained. A single sub-band is yielded for a certain level. The low-frequency sub-bands of image A is denoted for image B. There are three high frequency sub-bands for a certain level k, LH sub-band, HL sub-band and HH sub-band. We denote them as a general formation as , where j=LH,HL,HH.

- After the wavelet decomposition of two input source medical images, the low-frequency coefficients and the high-frequency coefficients are fused with a fusion rule. The feature information, which are mainly composed in high frequency coefficients, are used for fusion is very important. The corresponding fusion rule also is critical for fusion result. In the next sub-section, we will give more details. After the combination of wavelet coefficients, the low-frequency sub-bands is denoted by and the high frequency sub-bands are , j=LH,HL,HH.

- As an inverse processing of wavelet decomposition, the low-frequency and the high-frequency coefficients are used to reconstruct the fused image F by a standard discrete wavelet transform.

In the next sub-section, the way to extract feature and the fusion rule will be given.

2.2. Structure Tensor

Gradient is a very important mathematics tool for image processing. It can be used for edge detection, image segmentation and some other tasks. However, it is not robust for noise. To deal with this problem, averaging is a norm way to suppress noise. Unfortunately, the sign of gradient may be opposite, which means it can make cancellation effect [9]. To solve this problem, we introduce an alternative tool, structure tensor. For a discrete image, its two first order derivatives with respect two directions is used to construct the gradient vector , where T is the transpose. And we define the outer product of gradient vector and its transpose as the (initial) structure tensor:

It is easy to justify that is positive semi-definite matrix. After a standard computation, we can know its two eigen-values are respectively. The eigen-vector corresponding to shares the same direction withwhile the other eigen-vector is orthogonal to. A normal way to suppress noise is to apply the convolution of the components of with a Gaussian kernel (Gaussian smoothing), by which is extended to the linear structure tensor.

2.3. Nonlocal Structure Tensor

It is come to a common that classical Gaussian filter blurs and dislocates structures. The same situation happens for linear structure tensor. The main reason for this undesired effect is the weight of Gaussian filter only depends on the distance between two pixels. The weight is fixed for the fixed distance and it cannot adapt to the structure of the input data. When the filtering is applied, some important features may be smoothing away. Some improvements have been made by using some adaptive structure tensor, such as the nonlinear structure tensor in [10, 11], bilateral-based structure tensor in [12].

However, the above mentioned technologies only use the local structure information and neglect the relations between the pixels far away. The relation may be important especially when the two pixels have similar structure, even though the distance between them is far. In this case, a larger weight should be given. Therefore, we use the nonlocal means (NLM for short) filter, to construct a patch based structure tensor. The key idea of NLM is that the noised contained in an image may be smoothed away by averaging them as images contain repeated structures [13]. After computing the patch similarity, which is define as the weight sum of the difference of two patches with the same size, weight is appointed. If the structure of the neighbor is similar, the larger weight is given.

A standard NLM can be calculated as below. For a discrete noisy image, the filtered value

, is the weighted average of all the pixel in the image,

The weights satisfy the usual conditions .

The weights describe the similarity of the two pixels X and Y. The Neighborhood of a pixel is usually defined as a square window whose radius is r. These weights are calculated as

where

where h is a parameter to control filter degree. d is a scalar defined as :

where Gρ is a normalized Gaussian weighting function with zero mean and ρ standard deviation. There are two aspects that patch based filtering is prior to some classical filters such as Gaussian filter and bilateral filter. One aspect is NLM consider the information between two far away pixels. The long distance relation is exploited. The second one is that the local structure, which is contained in a local window region, is used to extract geometrical feature. In a word, the weight of NLM mainly depends on the similarity of the two patches, instead of that of two isolated pixels. Therefore, it obtain a better details-preserving denoising results. To improve the robustness of the original structure tensor, a patch based structure tensor() defined as below:

Two eigne-vectors of matrix . And two vectors are orthonormal. At the same time, they are paralled to

Two eigen-values are given by

and

Eigen-direction and eigen-value contain the important information of the local features. For example, The highest grey value fluctuations orientation is indicated by , while the coherence direction is given by implies the variation in a local region is very small and we can assert that it is a homogenous region.s implies the variation in one the main direction is strong and the variation in another eigen-vector direction is weak. A straight edges or flow-liked region shows this situation.implies that a corner may be detect. We use the definition of local coherence measure as

The idea of new structure tensor can be extended to some relative image processing tasks, as illustrated in [14].

3. MEDICAL IMAGE FUSION WITH NONLOCAL STUCTURE TENSOR

In a standard multi-resolution wavelet theory, the low-frequency sub-band LL represents the approximation part while the detail information is contained in three high-frequency sub-bands. Therefore, we devise two different strategies for these two types of coefficients fusion. Once the fused coefficients are obtained, the fused medical image can be obtained after an inverse wavelet transform.

3.1. Low-frequency Sub-band Fusion Rule

In our experiment, we apply widely used average method for low-frequency sub-band coefficients:

Where parameters k1 and k2 are fixed as k1=0.75 and k2=0.25.

3.2. The Fusion of High-frequency Sub-band

An essential step in medical image fusion is the way to combine high-frequency sub-bands. The features, such as edges and lines, produce larger coefficients. An ordinary way for fusion rule is the adaptive weighted average (WA) scheme, in which the fused high-frequency coefficients are the weighted sum of that of the source images. An alternative way is choose-max (CM) scheme, which uses directly the coefficient with the larger absolute value. In out setting, a patch based nonlocal structure tensor is used to measure local geometrical information. The eigen-values of patch based structure tensor show the local shape information, which are critical clues for fusion. Based on the discussion above, a novel medical image fusion rule is given as follows:

where is the weighted coefficients defined by

In equation (13), is the local coherence measure for a certain high sub-band in k-level decomposition of image A. The definition of is similar with that of image B.

4. EXPERIMENTAL RESULTS

To test the performance of the proposed scheme, we tested our method with CT and MR images. To do the quantitative analysis of experimental results comparison. Energy of image gradient (EOG) is used as quantitative comparison. We compare our method with two schemes: weighted average (WA) and choosing gradient max (CGM) fusion scheme.

The whole fused image is shown in Fig. (2). To further investigate the detail performance, we also give two zooming parts. In our experiments, the wavelet decomposition is applied to three methods with three levels. The wavelet basis function used is ’db3’.

Fig. (2) illustrated the fusion results of CT and MR medical images of the same brain area. The fusion results by different schemes are shown in Fig. 2(c)- Fig. 2(e). The search window is 11 × 11 and the similar window size is 5 × 5. AW scheme produces a fuzzy effect when compared with the other methods. As displayed in Fig. (3), patch based method produces a more smoothing effect for homogenous region. In Fig. (4), it is easy observed that AW scheme produces a lower contrast. Ghost occurs near edges in the fused image with CGM scheme. Our scheme preserves edges and keeps relative smooth. EOG data is reported in Table 1.

CONCLUSION

In this paper, a patch based structure tensor is defined. Then it used as a tool to extract information. The fusion rule uses adaptive weighted function of eigen-values. The proposed performances better when compared some related methods.

CONFLICT OF INTEREST

The authors confirm that this article content has no conflict of interest.

ACKNOWLEDGEMENTS

This work was supported by The Foundation of Henan Educational Committee (14A520029, 15A520070).